In the rapidly evolving landscape of Generative AI, stagnation is the enemy. For the past two years, the industry has been enamored with Diffusion models. Stable Diffusion, Midjourney, and DALL-E have dominated the conversation, and for good reason: they are excellent at turning text into pixels.

But at Unanimous Technologies Pvt Ltd, we identified a critical plateau in standard diffusion architectures. While they excel at artistic rendering, they often struggle with genuine visual understanding. They can generate a generic cat, but they fail to understand the complex spatial relationships within a specific reference image without relying on heavy, brittle control layers.

To solve this, we undertook a significant engineering challenge: moving beyond pure diffusion to integrate Multimodal Autoregressive Transformers into our production pipeline.

This article is a deep dive into how we shifted to a hybrid architecture, the specific engineering hurdles we overcame on Apple Silicon (MPS), and why businesses need to look beyond standard APIs to build truly custom AI solutions.

The “Diffusion Plateau” and Why We Needed More

Before discussing the solution, we must define the problem. Our platform was originally built on the backbone of standard Latent Diffusion models. These models utilize a UNet + VAE (Variational Autoencoder) architecture.

The Limitation of Denoising

In simple terms, diffusion models work by learning to remove noise from an image. When you give them a prompt, they start with static and hallucinate patterns until an image forms. This works wonders for “Text-to-Image.”

However, for “Image-to-Image” workflows, which are crucial for enterprise applications like e-commerce virtual try-ons, architectural rendering, or game asset creation, diffusion models often fall short. They don’t “see” the input image; they encode it, add noise, and denoise from that partially noised starting point, so the input steers the result without ever being interpreted.
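For readers who want the mechanics, the standard image-to-image procedure in latent diffusion can be written down in one line. This is stated from the general literature rather than from our codebase: the input image $x$ is encoded by the VAE encoder $\mathcal{E}$, and the resulting latent is noised to an intermediate timestep $t$ before denoising begins.

$$
z_0 = \mathcal{E}(x), \qquad z_t = \sqrt{\bar{\alpha}_t}\, z_0 + \sqrt{1 - \bar{\alpha}_t}\, \epsilon, \qquad \epsilon \sim \mathcal{N}(0, I)
$$

Here $\bar{\alpha}_t$ is the cumulative noise schedule. A higher “strength” setting picks a larger $t$, so less of $z_0$ survives. The input enters only through $z_0$; nothing in this step interprets what the image depicts.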

We needed a model that could:

- Analyze an input image with the reasoning capabilities of an LLM.

- Understand complex instructions (e.g., “Make the character in this specific pose hold a red apple instead of a blue sword”).

- Generate consistent outputs based on that semantic understanding.

This search led us to the next generation of Vision-Language Models (VLMs)—models that don’t just denoise, but think in pixels.

The Engineering Implementation

At Unanimous Technologies, we don’t just wrap APIs; we build custom pipelines. Integrating a large autoregressive transformer alongside a diffusion pipeline in one cohesive backend required significant refactoring.

Here is a look at our implementation strategy.

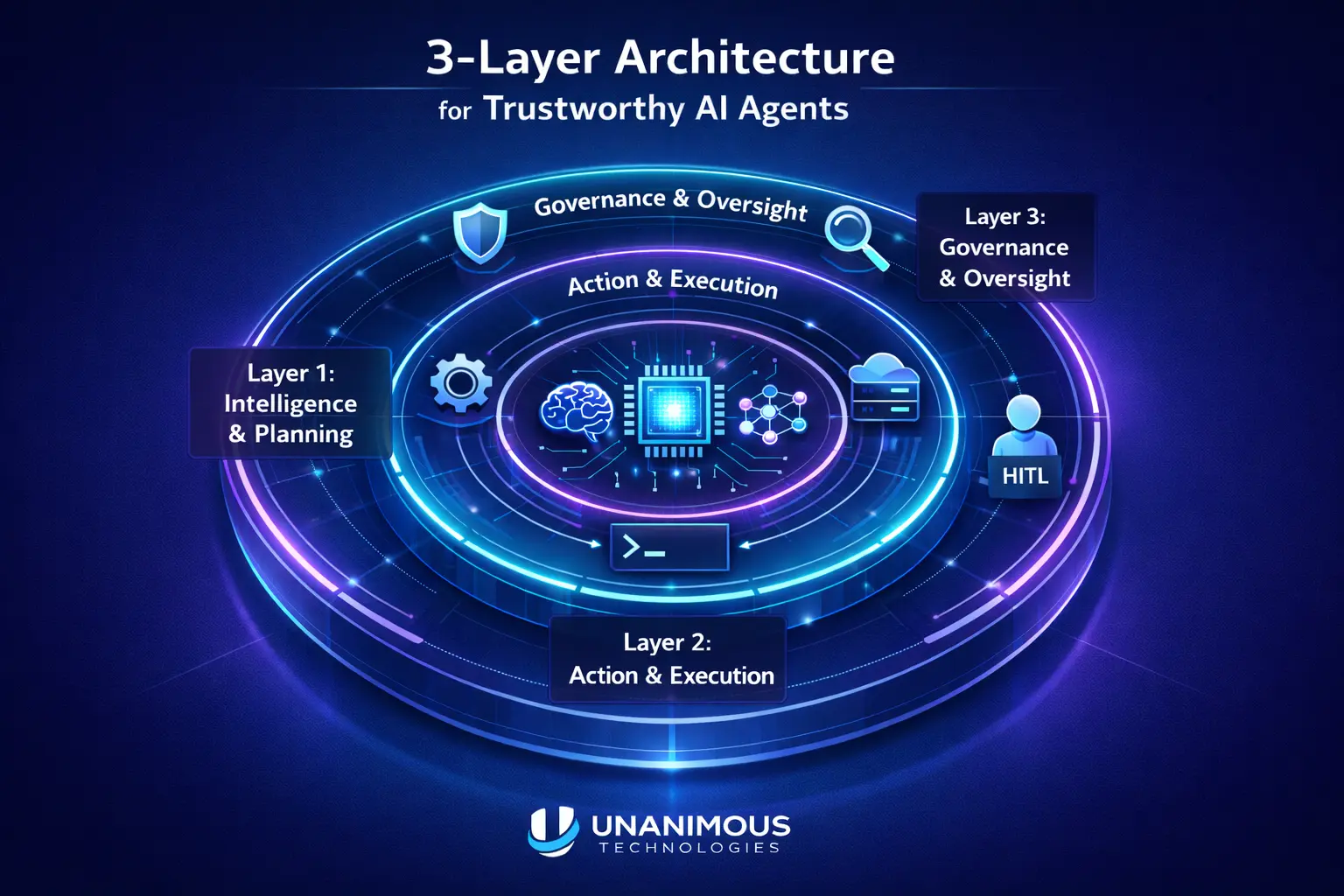

1. The Polymorphic Engine

Our existing backend was tightly coupled to standard diffusion libraries. To support multimodal transformers, we had to abstract our generation logic. We implemented a Factory Pattern that allows the system to swap neural architectures dynamically at runtime.

Conceptual Code Structure:

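```python
from abc import ABC, abstractmethod

from diffusers import StableDiffusionPipeline
from transformers import AutoModelForCausalLM, AutoProcessor


class ModelInterface(ABC):
    @abstractmethod
    def load_model(self):
        pass

    @abstractmethod
    def generate(self, prompt, image=None):
        pass


class DiffusionEngine(ModelInterface):
    def load_model(self):
        # Loads standard UNet components (checkpoint path elided)
        self.pipe = StableDiffusionPipeline.from_single_file(...)

    # generate() omitted in this conceptual sketch


class MultimodalEngine(ModelInterface):
    def __init__(self, path):
        self.path = path  # Local path or hub ID of the checkpoint

    def load_model(self):
        # Loads the autoregressive transformer
        self.model = AutoModelForCausalLM.from_pretrained(self.path, trust_remote_code=True)
        # Assumes the checkpoint ships a processor that wraps its tokenizer
        self.processor = AutoProcessor.from_pretrained(self.path, trust_remote_code=True)
        self.tokenizer = self.processor.tokenizer

    # generate() omitted in this conceptual sketch
```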

This polymorphism allows our frontend to remain agnostic. The user simply selects a model capability, and the backend handles the massive architectural switch invisibly.
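To illustrate the factory side of this design, here is a minimal sketch of a capability registry. It is not our production code: the capability names (`"fast"`, `"reasoning"`), the `register` decorator, the `create_engine` function, and the stub engines are all hypothetical, chosen so the example runs without any model weights.

```python
from abc import ABC, abstractmethod
from typing import Callable, Dict


class Engine(ABC):
    """Minimal stand-in for the shared generation interface."""

    @abstractmethod
    def generate(self, prompt: str, image=None) -> str:
        ...


# Maps a user-facing capability name to a zero-argument engine factory.
_REGISTRY: Dict[str, Callable[[], Engine]] = {}


def register(capability: str):
    """Class decorator that registers an engine under a capability name."""
    def decorator(cls):
        _REGISTRY[capability] = cls
        return cls
    return decorator


@register("fast")
class StubDiffusion(Engine):
    # Hypothetical stub; a real engine would wrap a diffusion pipeline.
    def generate(self, prompt: str, image=None) -> str:
        return f"diffusion:{prompt}"


@register("reasoning")
class StubMultimodal(Engine):
    # Hypothetical stub; a real engine would wrap the transformer.
    def generate(self, prompt: str, image=None) -> str:
        return f"multimodal:{prompt}"


def create_engine(capability: str) -> Engine:
    """Instantiate the engine registered for the requested capability."""
    try:
        factory = _REGISTRY[capability]
    except KeyError:
        known = ", ".join(sorted(_REGISTRY))
        raise ValueError(f"Unknown capability {capability!r}; expected one of: {known}") from None
    return factory()
```

The calling code only ever asks for a capability, for example `create_engine("reasoning").generate("a red apple")`, so adding a third architecture means registering one more class rather than touching the request handler.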

2. Memory Management on Apple Silicon (The Hard Part)

This was the most technically demanding aspect of the project. Running high-end AI models usually requires enterprise-grade GPUs. However, we optimized our local development and edge-deployment workflows for Apple Silicon (M-Series chips) using Metal Performance Shaders (MPS).

The VRAM Constraint:

Multimodal transformers are heavy. They often require 14-16GB of VRAM to run at reasonable precision, while standard diffusion models require about 6-8GB. On Apple Silicon that memory is unified and shared with the rest of the system, so keeping both models loaded at once (roughly 20-24GB combined) can exhaust memory and crash the process, even on high-end machines.

The Solution: Aggressive Garbage Collection

We developed a custom memory manager that acts as a traffic cop for the GPU.

- State Detection: When a request comes in, check which model is currently in VRAM.

- The Purge: If a switch is needed, we don’t just overwrite variables. We explicitly invoke Python’s garbage collector and empty the MPS cache to prevent memory fragmentation.

- Precision Tuning: We used bfloat16 (Brain Floating Point) where possible, but had to fall back to float16 for MPS compatibility. Because float16 has a much narrower dynamic range, this required careful error handling to catch numerical overflow, which surfaces as Infs and NaNs.

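```python
import gc

import torch


def offload_model(model):
    """Aggressively clear GPU memory before switching architectures."""
    # `del` only drops this function's local reference. The caller must also
    # release its own (e.g. `engine.model = None`), or the weights stay alive.
    del model
    gc.collect()
    if torch.backends.mps.is_available():
        torch.mps.empty_cache()  # Critical for Apple Silicon
```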
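The state-detection and purge steps can be combined into a single manager object. The following is a simplified sketch rather than our actual memory manager: `ModelSwitcher`, its `loaders` mapping, and the injectable `empty_cache` hook are hypothetical names, and the loaders are plain callables so the example runs without PyTorch installed.

```python
import gc
from typing import Callable, Dict, Optional


def _empty_accelerator_cache() -> None:
    """Release cached MPS memory if PyTorch with an MPS device is present."""
    try:
        import torch
    except ImportError:
        return
    if torch.backends.mps.is_available():
        torch.mps.empty_cache()


class ModelSwitcher:
    """Keeps at most one model resident and purges before each switch."""

    def __init__(
        self,
        loaders: Dict[str, Callable[[], object]],
        empty_cache: Callable[[], None] = _empty_accelerator_cache,
    ) -> None:
        self._loaders = loaders
        self._empty_cache = empty_cache
        self.active_name: Optional[str] = None
        self.active_model: Optional[object] = None

    def get(self, name: str) -> object:
        if name not in self._loaders:
            raise KeyError(f"No loader registered for {name!r}")

        # State detection: reuse the resident model when it already matches.
        if self.active_name == name:
            return self.active_model

        # The purge: drop our reference first, then collect and clear caches.
        if self.active_model is not None:
            self.active_model = None
            self.active_name = None
            gc.collect()
            self._empty_cache()

        self.active_model = self._loaders[name]()
        self.active_name = name
        return self.active_model
```

Because the switcher owns the only long-lived reference to the model, setting `self.active_model = None` actually makes the weights collectable, which a bare `del` inside a helper function cannot guarantee.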

3. Handling The “Token-by-Token” UX

User experience is paramount. In a Diffusion model, the user sees a noisy blur that slowly sharpens. In an Autoregressive model, the image is generated in distinct blocks, usually from top-left to bottom-right, similar to how an LLM writes text.

We rewrote our WebSocket communication layer. Instead of sending “step progress,” we implemented a streaming decoder that sends decoded image fragments in real-time. This ensures the user isn’t staring at a blank screen while the transformer “thinks.”
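A streaming decoder of this kind can be sketched as follows. This is an illustration under assumptions, not our WebSocket layer: `stream_rows`, the message shape, and the `decode_row` and `send` callables are hypothetical, and the demo substitutes fake tokens and an in-memory list for the model and the socket.

```python
import asyncio
import json
from typing import AsyncIterator, Awaitable, Callable, List


async def stream_rows(
    tokens: AsyncIterator[int],
    tokens_per_row: int,
    decode_row: Callable[[List[int]], str],
    send: Callable[[str], Awaitable[None]],
) -> int:
    """Send one message per completed row of image tokens; return rows sent."""
    buffer: List[int] = []
    row = 0
    async for token in tokens:
        buffer.append(token)
        if len(buffer) == tokens_per_row:
            # A full row is available: decode it and push it to the client.
            payload = {"type": "fragment", "row": row, "data": decode_row(buffer)}
            await send(json.dumps(payload))
            buffer = []
            row += 1
    # Any trailing partial row is dropped in this sketch.
    await send(json.dumps({"type": "done", "rows": row}))
    return row


async def demo() -> List[str]:
    """Run the streamer against fake tokens and collect the sent messages."""
    async def fake_tokens() -> AsyncIterator[int]:
        for token in range(6):
            yield token

    sent: List[str] = []

    async def fake_send(message: str) -> None:
        sent.append(message)

    await stream_rows(fake_tokens(), 3, lambda r: "-".join(map(str, r)), fake_send)
    return sent
```

In a real service, `send` would be the WebSocket connection's send coroutine and `decode_row` would turn image tokens into an encoded pixel strip that the frontend paints at the given row.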

Performance Benchmarks: Speed vs. Intelligence

Honesty is key in engineering. While Multimodal Transformers are powerful, they are not a replacement for everything. We benchmarked both architectures internally to determine the best use cases for our clients.

| Feature | Standard Diffusion | Multimodal Transformer |
| --- | --- | --- |
| Inference Time | 3–6 seconds | 30–50 seconds |
| Best For | Fast iteration, style transfer | Complex instruction following, reasoning |
| Reasoning | Low (pixel-based) | High (semantic-based) |
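One way to act on these numbers is a small routing policy that picks an engine per request. The sketch below is an assumption about how such a policy could look, not a description of our router: `pick_engine` and its parameters are hypothetical, and the only figure taken from the table is the 50-second upper bound for the transformer.

```python
# Upper end of the multimodal inference range reported in the table above.
MULTIMODAL_WORST_CASE_S = 50.0


def pick_engine(needs_reasoning: bool, latency_budget_s: float) -> str:
    """Return "multimodal" only when reasoning is needed and time allows."""
    if needs_reasoning and latency_budget_s >= MULTIMODAL_WORST_CASE_S:
        return "multimodal"
    # Assumed policy: fall back to diffusion when the budget is too tight.
    return "diffusion"
```

For example, `pick_engine(True, 60.0)` selects the transformer, while a request that needs reasoning but must return within 10 seconds falls back to diffusion. Whether that fallback or an explicit error is the better behavior depends on the product.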

The “Understanding” Gap

We ran a test: Provide a rough sketch of a dashboard UI and ask the AI to turn it into a high-fidelity React design.

- Diffusion: Generated a generic futuristic screen. It ignored the specific button placement of the sketch because it treated the sketch as noise guidance.

- Multimodal Transformer: Recognized the text “Login” in the rough sketch, understood the layout was a “login form,” and generated a UI that matched the structural intent of the drawing.

This is the business value. It allows us to build tools where the AI acts as a collaborator that understands intent.

Why Custom Integration Matters for Your Business

Why did Unanimous Technologies go through the trouble of hosting this locally instead of just paying for public APIs?

- Data Privacy & Security: Many of our clients operate in Fintech and Healthcare, where sending proprietary data to a third-party public API can violate compliance requirements. By running our own pipeline, we keep that data inside infrastructure we control.

- Cost Control at Scale: API costs scale linearly. By optimizing open-weights models on our own compute, the unit economics improve drastically as volume increases.

- Steerability: Public APIs are “black boxes.” By owning the pipeline, we can fine-tune models on specific datasets—your brand guidelines, your art style, or your industry’s terminology.
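The cost argument can be made concrete with a simple break-even model. This is an illustrative sketch with assumed symbols, not our actual pricing: let $p$ be the API price per image, $F$ the fixed monthly cost of self-hosted compute, $c$ the marginal self-hosted cost per image, and $n$ the number of images generated per month.

$$
C_{\text{API}}(n) = p\,n, \qquad C_{\text{self}}(n) = F + c\,n, \qquad n^{*} = \frac{F}{p - c} \quad (p > c)
$$

Above $n^{*}$ images per month, self-hosting is the cheaper option, and its per-image cost $F/n + c$ keeps falling as volume grows, whereas the API's per-image cost stays at $p$.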

Partner with Unanimous Technologies

The AI landscape changes weekly. What was state-of-the-art last month is obsolete today. The “plug-and-play” API solutions often lack the nuance, privacy, and control that serious businesses require.

At Unanimous Technologies Pvt Ltd, we don’t just read the research papers; we implement them. We solve the hard engineering problems—memory optimization, latency reduction, and custom architecture—so you can focus on your business logic.

Whether you are looking to build a generative design tool, an intelligent visual analysis system, or a custom AI backend, you need a team that understands the code behind the magic.

Contact Unanimous Technologies Today to discuss your custom AI roadmap.